AMD To Host AI and Data Center Event on June 13th - MI300 Details Inbound?

by Ryan Smith on May 9, 2023 12:45 PM EST

In a brief note posted to its investor relations portal this morning, AMD announced that it will be holding a special event centered on AI and the data center on June 13th. Dubbed the “AMD Data Center and AI Technology Premiere”, the live event is slated to be hosted by CEO Dr. Lisa Su and will focus on AMD’s AI and data center product portfolios, with a particular spotlight on how the company is expanding those portfolios and its plans for growing these market segments.

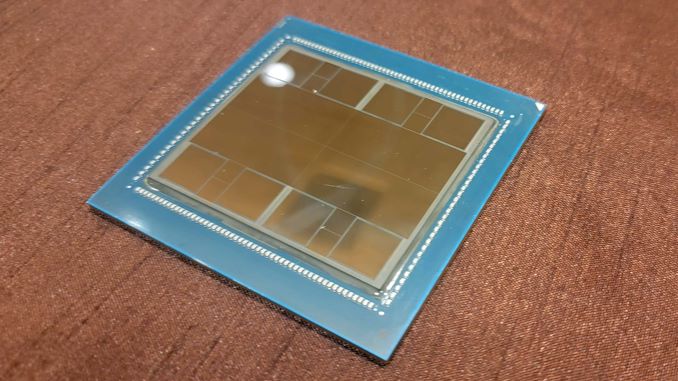

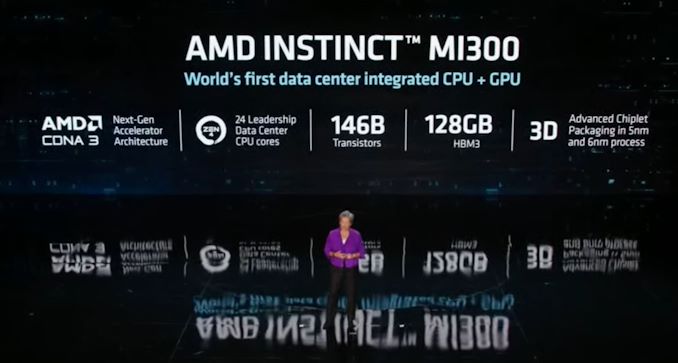

The very brief announcement doesn’t offer any further details on what content to expect. However, the nature of the event points squarely at AMD’s forthcoming Instinct MI300 accelerator. MI300 is AMD’s first shot at building a true data center/HPC-class APU, combining the best of AMD’s CPU and GPU technologies. AMD has offered only a handful of technical details about MI300 thus far: we know it’s a disaggregated design that uses multiple chiplets built on TSMC’s 5nm process, with 3D die stacking placing them over a base die. With MI300 slated to ship this year, AMD will need to fill in the blanks as the product gets closer to launch.

As we noted in our coverage of AMD’s earnings report last week, AMD’s major investors have been waiting with bated breath for additional details on the accelerator. Simply put, investors see data center AI accelerators as the next major growth opportunity for high-performance silicon, eyeing the high margins these products have afforded NVIDIA and other AI-adjacent rivals, so there is a lot of pressure on AMD to claim a slice of what’s expected to be a highly profitable pie. MI300 has been in the works for years, so that pressure is more a reaction to the money than to the silicon itself. Still, MI300 is expected to be AMD’s best opportunity yet to capture a meaningful portion of the data center GPU market.

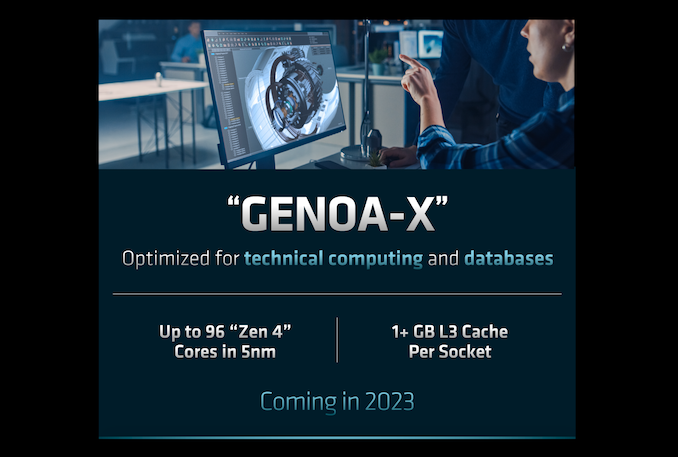

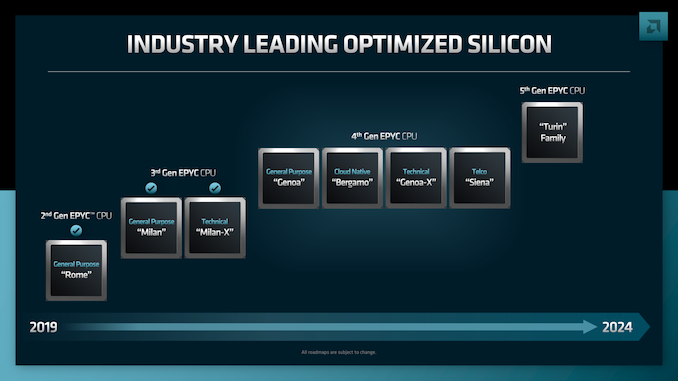

MI300 aside, given the dual AI and data center focus of the event, this is also where we’re likely to see more details on AMD’s forthcoming EPYC “Genoa-X” CPUs. The L3 V-Cache-equipped version of AMD’s current-generation EPYC 9004 series Genoa CPUs, Genoa-X has been on AMD’s roadmap for a while. And with their consumer equivalent parts already shipping (Ryzen 7000X3D), AMD should be nearing completion of the EPYC parts. AMD has previously confirmed that Genoa-X will ship with up to 96 CPU cores, with over 1GB in total L3 cache available on the chip to further boost performance on workloads that benefit from the extra cache.

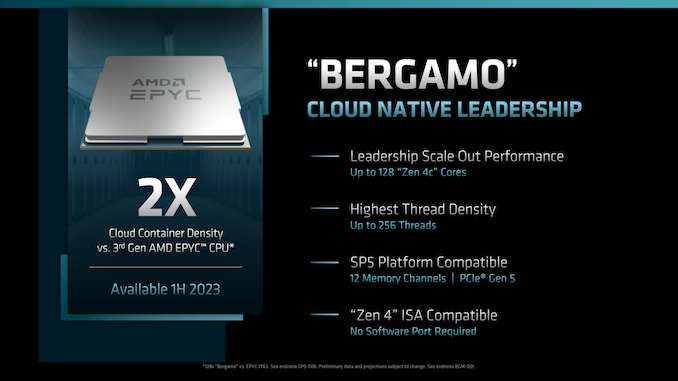

AMD’s ultra-dense EPYC Bergamo chip is also in the pipeline, though given the event’s high-performance focus, it’s less certain whether it will appear at the show. Based on AMD’s compact Zen 4c architecture, Bergamo is aimed at cloud service providers who need lots of cores to split up amongst customers, packing up to 128 CPU cores into a single chip. Like Genoa-X, Bergamo is slated to launch this year, so further details about it should come to light sooner rather than later.

But whatever AMD does (or doesn’t) show at their event, we’ll find out on June 13th at 10am PT (17:00 UTC). AMD will be live streaming the event from their website as well as YouTube.

Source: AMD

Threska - Tuesday, May 9, 2023

Would be nice to see what they do with their Xilinx acquisition.

brucethemoose - Wednesday, May 10, 2023

Does this mean the MI300 is now a high-volume part instead of a niche HPC chip?

The way training/inference is so consolidated around A100s now is almost dystopian. Sometimes you find a JAX repo that works on Google TPUs (which are overpriced anyway). But other than that, from the perspective of a hobbyist, it's like vendors outside Nvidia don't exist. So I hope AMD (and Intel) can break that up a little by repurposing these things.