OpenCAPI to Fold into CXL - CXL Set to Become Dominant CPU Interconnect Standard

by Ryan Smith on August 1, 2022 5:00 PM EST - Posted in CPUs, Memory, OpenCAPI, CXL, Flash Memory Summit 2022

With the 2022 Flash Memory Summit taking place this week, there is a slew of solid-state storage announcements in the pipeline over the coming days, and the show is also an increasingly popular venue for discussing I/O and interconnect developments. Kicking things off on that front, this afternoon the OpenCAPI and CXL consortiums are issuing a joint announcement that the two groups will be joining forces, with the OpenCAPI standard and the consortium’s assets being transferred to the CXL consortium. With this integration, CXL is set to become the dominant CPU-to-device interconnect standard, as virtually all major manufacturers are now backing it, and competing standards have bowed out of the race and been absorbed by CXL.

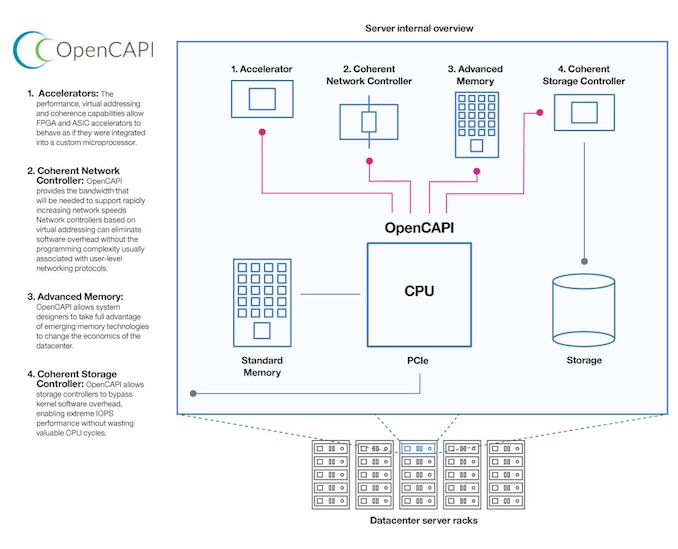

Pre-dating CXL by a few years, OpenCAPI was one of the earlier standards for a cache-coherent CPU interconnect. The standard, backed by AMD, Xilinx, and IBM, among others, was an extension of IBM’s existing Coherent Accelerator Processor Interface (CAPI) technology, opening it up to the rest of the industry and placing its control under an industry consortium. In the last six years, OpenCAPI has seen a modest amount of use, most notably being implemented in IBM’s POWER9 processor family. Like similar CPU-to-device interconnect standards, OpenCAPI was essentially an application extension on top of existing high-speed I/O standards, adding things like cache coherency and faster (lower latency) access modes so that CPUs and accelerators could work together more closely despite their physical disaggregation.

But, as one of several competing standards tackling this problem, OpenCAPI never quite caught fire in the industry. Born at IBM, the standard counted IBM as its biggest user at a time when the company’s share of the server market has been in decline. And even consortium members on the rise, such as AMD, ended up skipping the technology; AMD, for example, leverages its own Infinity Fabric architecture for server CPU/GPU connectivity. This has left OpenCAPI without a strong champion, and without a sizable user base to keep things moving forward.

Ultimately, the wider industry’s desire to consolidate behind a single interconnect standard, for the sake of both manufacturers and customers, has brought the interconnect wars to a head. And with Compute Express Link (CXL) quickly becoming the clear winner, the OpenCAPI consortium is becoming the latest interconnect standards body to bow out and be absorbed by CXL.

Under the terms of the proposed deal, which is pending approval by the necessary parties, the OpenCAPI consortium’s assets and standards will be transferred to the CXL consortium. This would include all of the relevant technology from OpenCAPI, as well as the group’s lesser-known Open Memory Interface (OMI) standard, which allowed for attaching DRAM to a system over OpenCAPI’s physical bus. In essence, the CXL consortium would be absorbing OpenCAPI. And while it won’t be continuing OpenCAPI’s development, for obvious reasons, the transfer means that any useful technologies from OpenCAPI could be integrated into future versions of CXL, strengthening the overall ecosystem.

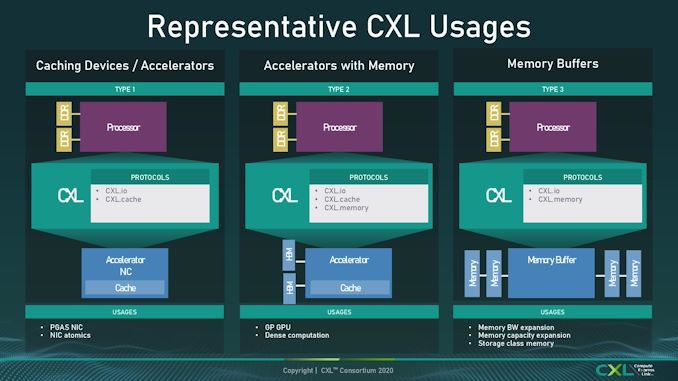

With OpenCAPI absorbed into CXL, the Intel-backed standard is left as the dominant interconnect standard, and the de facto standard for the industry going forward. The competing Gen-Z standard was similarly absorbed into CXL earlier this year, and the CCIX standard has been left behind, with its major backers joining the CXL consortium in recent years. So even with the first CXL-enabled CPUs not shipping quite yet, at this point CXL has cleared the neighborhood, as it were, becoming the sole remaining server CPU interconnect standard for everything from accelerator I/O (CXL.io) to memory expansion over the PCIe bus.

Source: OpenCAPI Press Release

7 Comments


Kamen Rider Blade - Tuesday, August 2, 2022

"There can be only one!"

domboy - Tuesday, August 2, 2022

"And he does not share power."

samlebon2306 - Thursday, August 4, 2022

Yep, the Oracle was right

Wereweeb - Tuesday, August 2, 2022

Can CXL even match OMI for near-memory applications? (In terms of latency, naturally) I know IBM claims OMI's on-DIMM controller only adds some 4ns of latency vs your typical DDR (5?) DIMM. What about CXL? I'd really like to see a standard that could in the future help replace serial DDR.

If they made DRAM that uses the PCIe phy to communicate with the CPU, we could see much more flexible setups where the same CPU can be set up either for many accelerators or just a ridiculous amount of memory bandwidth - without having to rely on HBM. More I/O per dollar as less pins are required for most consumers. PCIe 6.0 is expensive but if you're already making motherboards that support it you might as well go all out.

Kamen Rider Blade - Wednesday, August 3, 2022

I don't think CXL was designed for DIMMs. That's why I'm excited that OMI connected to an on-DIMM controller has a chance to be pushed to JEDEC for standardization.

acantle - Wednesday, August 3, 2022

Good observations Wereweeb. I gave a presentation, link below, titled "Power and Latency Analysis of the Memory Tiering Pyramid" at FMS yesterday that clearly articulates how our industry now has the potential to significantly benefit from OMI & CXL being under one roof!

https://youtu.be/Qc1BVlsYwvM

avariciousafrican - Thursday, August 4, 2022

Good observations Wereweeb.