FMS 2019: EDSFF Form Factors Update - Long, Short, & Everything In-Between

by Billy Tallis on August 16, 2019 9:15 AM EST

What began as Intel's Ruler concept for a new SSD form factor blossomed into the Enterprise and Datacenter SSD Form Factor (EDSFF) family of standards, with the version 1.0 specs published in early 2018. Those standards have since been put into production by enough vendors to generate a lot of real-world feedback, and it's starting to become clear how the numerous options will fare in the long run. At Flash Memory Summit, several companies shared their experiences with the new form factors and explained the motivation behind the changes that have been made to the original EDSFF specs.

EDSFF 3" module next to non-standard 2.5" drive with EDSFF connector

The 3" EDSFF form factors (two lengths and two thicknesses) are the most similar to the existing 2.5" drive form factor, and can be accommodated without overhauling a server's layout. These 3" form factors also seem to be attracting the least interest, and if they see widespread use it will primarily be in more traditional enterprise 2U or 3U server applications where they can take the place of U.2 drives and also replace some uses for PCIe add-in cards. The SFF-TA-1008 spec for the 3" form factors is still sitting at version 1.0, published in March 2018. The most interesting EDSFF 3" device we ran across at FMS this year was a Gen-Z DDR4 memory module.

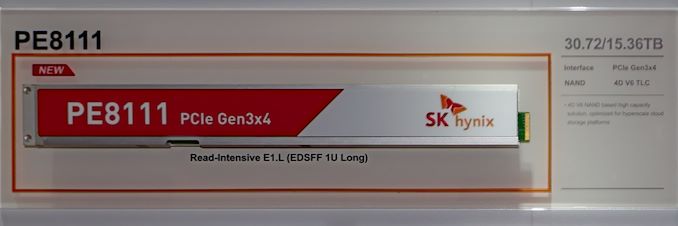

Hyperscalers are much more interested in the benefits of the 1U form factors. The EDSFF 1U Long (abbreviated E1.L) form factor is most similar to Intel's original Ruler concept, and seems best suited for high-density storage. For example, Microsoft Azure is using the 18mm thick E1.L form factor in its Olympus FX-16 1U JBOF, with 16 drives of 16TB each (256TB total) in a 1U OCP chassis that also has PCIe cabling on the front panel. This is pretty much the least dense use case for E1.L drives: the thinner 9.5mm version of E1.L almost doubles the density, at the cost of making cooling more of a challenge.
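The density figures above can be checked with some quick arithmetic. The sketch below is only a rough estimate: it assumes that the number of drives fitting across a 1U chassis scales inversely with drive thickness, ignoring connector pitch and airflow gaps, and the variable names are purely illustrative.

```python
# Figures quoted for the Olympus FX-16 JBOF.
DRIVES_PER_CHASSIS = 16
TB_PER_DRIVE = 16

total_tb = DRIVES_PER_CHASSIS * TB_PER_DRIVE
print(total_tb)  # 256

# The two E1.L thicknesses mentioned in the article, in millimetres.
THICK_MM = 18.0
THIN_MM = 9.5

# Assumption: drive count scales with 1/thickness (no allowance for
# connector pitch or cooling gaps), so this is an upper-level estimate only.
density_gain = THICK_MM / THIN_MM
print(round(density_gain, 2))  # 1.89
```

A ratio of roughly 1.9 from thickness alone is consistent with the "almost doubles" description, though real chassis layouts will depend on slot pitch rather than on drive thickness by itself.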

E1.S bare PCB

The EDSFF 1U Short (E1.S) form factor is shaping up to be more of a general-purpose standard, and has seen the most changes. E1.S was originally conceived as a fairly direct replacement for M.2 that addresses the most important shortcomings of M.2 for datacenter usage: E1.S is a bit wider so that two rows of NAND flash packages can fit on the PCB, it's hot-swappable, and it can accommodate higher power levels thanks to a 12V supply and easier cooling. (This is also the basic summary for Samsung's competing NF1 form factor.) The E1.S spec originally defined just the PCB dimensions, leaving it up to the vendor to design an appropriate carrier tray or case. In this bare PCB configuration, the maximum power draw was specified as 12W.

E1.S with heatspreader

Demand for even higher power use cases has resulted in the definition of standard case and heatsink sizes for E1.S. For cards up to 20W, a 9.5mm thick symmetrical case/heatspreader can be used, making E1.S much more like a shortened version of the fully-enclosed E1.L. For even higher power applications, an asymmetrical case with heatsink has been designed, bringing the thickness all the way up to 25mm and allowing for 25W. The 9.5mm symmetrical case will be adequate for most SSD use cases. The 25mm asymmetrical case+heatsink is intended more for non-SSD devices such as accelerators that dissipate most of their power from a single large chip. These new options allow E1.S to support the same power (and performance) levels possible with 2.5" U.2 drives, while being significantly easier to cool.
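The three E1.S options described so far can be summarized as a small lookup. This is a minimal sketch using only the power limits quoted in this article (12W, 20W and 25W); the `E1SOption` type and `pick_e1s_option` helper are hypothetical names invented for illustration, not anything defined by the spec.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class E1SOption:
    name: str
    thickness_mm: Optional[float]  # None: no thickness is quoted for the bare PCB
    max_power_w: float


# Ordered from lowest to highest power limit, as quoted in the article.
E1S_OPTIONS = [
    E1SOption("bare PCB", None, 12.0),
    E1SOption("symmetrical case/heatspreader", 9.5, 20.0),
    E1SOption("asymmetrical case + heatsink", 25.0, 25.0),
]


def pick_e1s_option(power_w: float) -> Optional[E1SOption]:
    """Return the lowest-power-limit option that can handle power_w, or None."""
    for option in E1S_OPTIONS:
        if power_w <= option.max_power_w:
            return option
    return None  # exceeds every E1.S option listed here


print(pick_e1s_option(15.0).name)  # symmetrical case/heatspreader
```

Choosing the first option whose limit covers the device's power draw mirrors the article's framing: most SSDs land in the 9.5mm case, while only devices above 20W need the 25mm heatsink version.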

E1.S FPGA Accelerator bare PCB and full case+heatsink

To accommodate these high-power devices, the E1.S form factor now also allows for 8 PCIe lanes using one of the longer connector variants that was already permitted for E1.L and the 3" form factors. Several vendors at Flash Memory Summit were showing off devices using the E1.S form factor with the asymmetrical heatsink: a mix of SSDs and accelerators.

1 Comment

shelbystripes - Friday, August 16, 2019

Interesting how the 8x bandwidth and 25W power are enabling uses beyond SSDs now. Could let you make use of all those PCIe lanes Epyc offers, even in a 1U system...