NVIDIA G-Sync Review

by Anand Lal Shimpi on December 12, 2013 9:00 AM EST

How it Plays

The requirements for G-Sync are straightforward. You need a G-Sync enabled display (in this case the modified ASUS VG248QE is the only one “available”, more on this later). You need a GeForce GTX 650 Ti Boost or better with a DisplayPort connector. You need a DP 1.2 cable, a game capable of running in full screen mode (G-Sync reverts to V-Sync if you run in a window) and you need Windows 7 or 8.1.

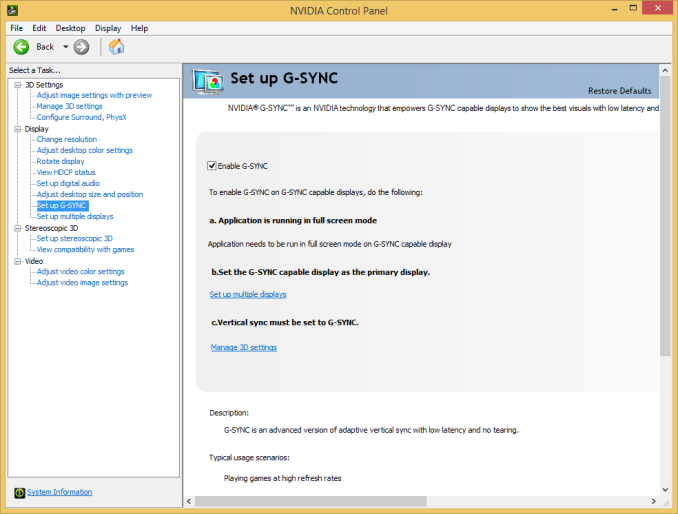

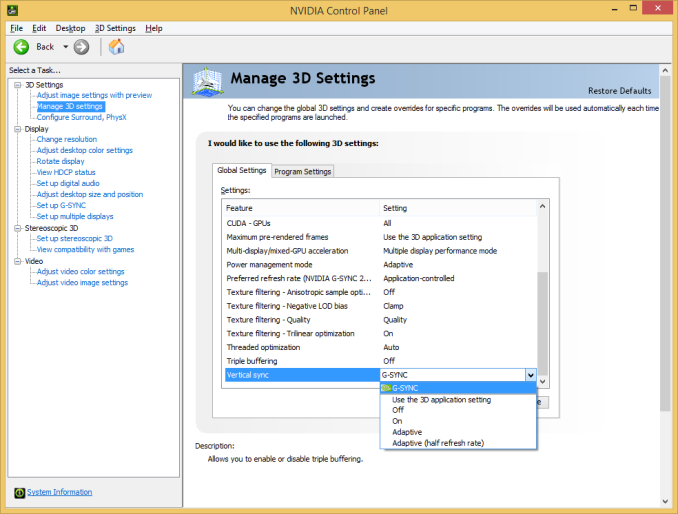

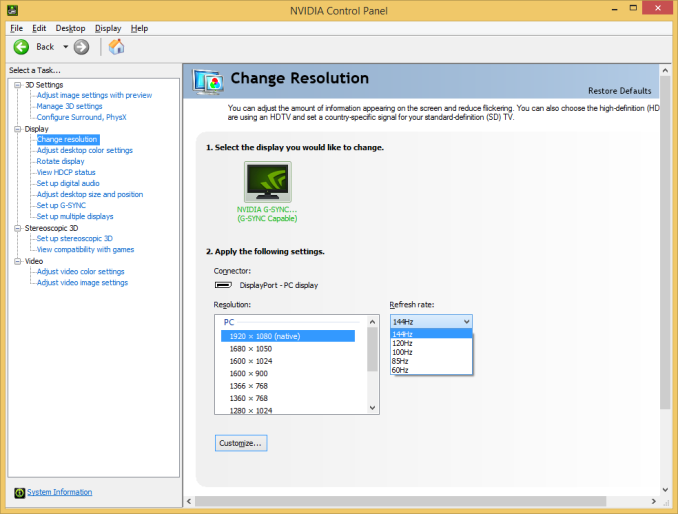

G-Sync enabled drivers are already available at GeForce.com (R331.93). Once you’ve met all of the requirements you’ll see the appropriate G-Sync toggles in NVIDIA’s control panel. Even with G-Sync on you can still control the display’s refresh rate. To maximize the impact of G-Sync NVIDIA’s reviewer’s guide recommends testing v-sync on/off at 60Hz but G-Sync at 144Hz. For the sake of not being silly I ran all of my comparisons at 60Hz or 144Hz, and never mixed the two, in order to isolate the impact of G-Sync alone.

NVIDIA sampled the same pendulum demo it used in Montreal a couple of months ago to demonstrate G-Sync, but I spent the vast majority of my time with the G-Sync display playing actual games.

I’ve been using Falcon NW’s Tiki system for any experiential testing ever since it showed up with NVIDIA’s Titan earlier this year. Naturally that’s where I started with the G-Sync display. Unfortunately the combination didn’t fare all that well, with the system exhibiting hard locks and very low in-game frame rates with the G-Sync display attached. I didn’t have enough time to further debug the setup and plan on shipping NVIDIA the system as soon as possible to see if they can find the root cause of the problem. Switching to a Z87 testbed with an EVGA GeForce GTX 760 proved to be totally problem-free with the G-Sync display thankfully enough.

At a high level the sweet spot for G-Sync is going to be a situation where you have a frame rate that regularly varies between 30 and 60 fps. Game/hardware/settings combinations that result in frame rates below 30 fps will exhibit stuttering since the G-Sync display will be forced to repeat frames, and similarly if your frame rate is equal to your refresh rate (60, 120 or 144 fps in this case) then you won’t really see any advantages over plain old v-sync.
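As a rough way to picture those three regimes, the sketch below sorts a frame rate into the categories described above. The 30 Hz floor and the 60 Hz refresh rate come from the text; the function name and the label wording are made up for illustration, and real behavior near the boundaries will be less clear-cut than a hard threshold suggests.

```python
# Hypothetical helper: classify a frame rate into the regimes described above.
# The thresholds mirror the text (30 Hz minimum refresh, 60 Hz panel setting).

def gsync_regime(fps, refresh_hz=60.0, min_refresh_hz=30.0):
    """Return a short label for how G-Sync is expected to behave at `fps`."""
    if fps < min_refresh_hz:
        # The panel cannot wait any longer for a new frame, so it repeats one.
        return "below range: frames repeat, expect stutter"
    if fps >= refresh_hz:
        # The GPU keeps up with the panel, so v-sync would look the same.
        return "at refresh rate: no real advantage over v-sync"
    # In between, the panel refreshes whenever the GPU delivers a frame.
    return "sweet spot: refresh follows the GPU"


for fps in (25, 45, 60):
    print(f"{fps} fps -> {gsync_regime(fps)}")
```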

I've put together a quick 4K video showing v-sync off, v-sync on and G-Sync on, all at 60Hz, while running Bioshock Infinite on my GTX 760 testbed. I captured each video at 720p60 and put them all side by side (thus making up the 3840 pixel width of the video). I slowed the video down by 50% in order to better demonstrate the impact of each setting. The biggest differences tend to be at the very beginning of the video. You'll see tons of tearing with v-sync off, some stutter with v-sync on, and a much smoother overall experience with G-Sync on.

While the comparison above does a great job showing off the three different modes we tested at 60Hz, I also put together a 2x1 comparison of v-sync and G-Sync to make things even more clear. Here you're just looking for the stuttering on the v-sync setup, particularly at the very beginning of the video:

Assassin’s Creed IV

I started out playing Assassin’s Creed IV multiplayer with v-sync off. I used GeForce Experience to predetermine the game quality settings, which ended up being maxed out even on my GeForce GTX 760 test hardware. With v-sync off and the display set to 60Hz, there was just tons of tearing everywhere. In AC4 the tearing was arguably even worse as it seemed to take place in the upper 40% of the display, dangerously close to where my eyes were focused most of the time. Playing with v-sync off was clearly not an option for me.

Next was to enable v-sync with the refresh rate left at 60Hz. Lots of AC4 renders at 60 fps, although in some scenes both outdoors and indoors I saw frame rates drop down into the 40 - 51 fps range. Here with v-sync enabled I started noticing stuttering, especially as I moved the camera around and the difficulty of what was being rendered varied. In some scenes the stuttering was pretty noticeable. I played through a bunch of rounds with v-sync enabled before enabling G-Sync.

I enabled G-Sync, once again leaving the refresh rate at 60Hz and dove back into the game. I was shocked; virtually all stuttering vanished. I had to keep FRAPS running to remind me of areas where I should be seeing stuttering. The combination of fast enough hardware to keep the frame rate in the G-Sync sweet spot of 40 - 60 fps and the G-Sync display itself produced a level of smoothness that I hadn’t seen before. I actually realized that I was playing Assassin’s Creed IV with an Xbox 360 controller literally two feet away from my PS4 and having a substantially better experience.
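To illustrate why a frame rate in the 40s stutters with v-sync on but not with G-Sync, here is a simplified timing model. It assumes the GPU finishes a frame every 22 ms (about 45 fps) and never stalls waiting for the display, which is closer to triple buffering than to strict double buffering. With v-sync each finished frame waits for the next 60Hz refresh; with a variable refresh display it is shown as soon as it is done. The function names and the constant render time are assumptions for the sake of the example, not measurements.

```python
import math

def vsync_present_times(render_ms, n_frames, refresh_hz=60.0):
    """Display time (ms) of each frame when it must wait for a fixed refresh."""
    tick = 1000.0 / refresh_hz
    times = []
    for i in range(1, n_frames + 1):
        done = i * render_ms                         # GPU finishes frame i
        times.append(math.ceil(done / tick) * tick)  # shown at next refresh
    return times

def variable_refresh_present_times(render_ms, n_frames):
    """Display time (ms) of each frame when the panel refreshes on delivery."""
    return [i * render_ms for i in range(1, n_frames + 1)]

def intervals(times):
    """Gaps (ms, rounded to 0.1) between consecutive displayed frames."""
    return [round(b - a, 1) for a, b in zip(times, times[1:])]


print("v-sync :", intervals(vsync_present_times(22.0, 6)))
print("G-Sync :", intervals(variable_refresh_present_times(22.0, 6)))
```

In this model the v-sync case alternates between gaps of roughly 16.7 ms and 33.3 ms even though the GPU is delivering frames at a steady pace, while the variable refresh case shows an even 22 ms between frames. That uneven cadence is one plausible reading of the stutter described above.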

Batman: Arkham Origins

Next up on my list was Batman: Arkham Origins. I hadn’t played the past couple of Batman games but they always seemed interesting to me so I was glad to spend some time with this one. Having skipped the previous ones, I obviously didn’t have the repetitive/unoriginal criticisms of the game that some others seemed to have had. Instead I enjoyed its pace and thought it was a decent way to kill some time (or in this case, test a G-Sync display).

Once again I started off with v-sync off with the display set to 60Hz. For a while I didn’t see any tearing, at least until I ended up inside a tower during the second mission of the game. I was panning across a small room and immediately encountered a ridiculous amount of tearing. This was even worse than Assassin’s Creed. What’s interesting about the tearing in Batman was that it really felt more limited in frequency than in AC4’s multiplayer, but when it happened it was substantially worse.

Next up was v-sync on, once again at 60Hz. Here I noticed sharp variations in frame rate resulting in tons of stutter. The stutter was pretty consistent both outdoors (panning across the city) and indoors (while fighting large groups of enemies). I remember seeing the stutter and noting that it was just something I’m used to expecting. Traditionally I’d fight this on a 60Hz panel by lowering quality settings to at least drive for more time at 60 fps. With G-Sync enabled, it turns out I wouldn’t have to.

The improvement to Batman was insane. I kept expecting it to somehow not work, but G-Sync really did smooth out the vast majority of stuttering I encountered in the game - all without touching a single quality setting. You can still see some hiccups, but they are the result of other things (CPU limitations, streaming textures, etc…). That brings up another point about G-Sync: once you remove GPU/display synchronization as a source of stutter, all other visual artifacts become even more obvious. Things like aliasing and texture crawl/shimmer become even more distracting. The good news is you can address those things, often with a faster GPU, which all of a sudden makes the G-Sync play an even smarter one on NVIDIA’s part. Playing with G-Sync enabled raises my expectations for literally all other parts of the visual experience.

Sleeping Dogs

I’ve been wanting to play Sleeping Dogs ever since it came out, and the G-Sync review gave me the opportunity to do just that. I like the premise and the change of scenery compared to the sandbox games I’m used to (read: GTA), and at least thus far I can put up with the not-quite-perfect camera and fairly uninspired driving feel. The bigger story here is that running Sleeping Dogs at max quality settings gave my GTX 760 enough of a workout to really showcase the limits of G-Sync.

With v-sync (60Hz) on I typically saw frame rates around 30 - 45 fps, but there were many situations where the frame rate would drop down to 28 fps. I was really curious to see what the impact of G-Sync was here since below 30 fps G-Sync would repeat frames to maintain a 30Hz refresh on the display itself.

The first thing I noticed after enabling G-Sync is my instantaneous frame rate (according to FRAPS) dropped from 27-28 fps down to 25-26 fps. This is that G-Sync polling overhead I mentioned earlier. Now not only did the frame rate drop, but the display had to start repeating frames, which resulted in a substantially worse experience. The only solution here was to decrease quality settings to get frame rates back up again. I was glad I ran into this situation as it shows that while G-Sync may be a great solution to improve playability, you still need a fast enough GPU to drive the whole thing.
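Converting those FRAPS readings into frame times makes the cost easier to see. The snippet below uses the midpoints of the two quoted ranges (27.5 and 25.5 fps), which is an assumption made only to get a single number out, and compares both against the 33.3 ms budget implied by a 30Hz minimum refresh.

```python
def frame_time_ms(fps):
    """Average time spent on one frame, in milliseconds."""
    return 1000.0 / fps

# Midpoints of the ranges quoted above (assumed, for illustration only).
fps_before = (27 + 28) / 2   # G-Sync off
fps_after = (25 + 26) / 2    # G-Sync on

extra_ms = frame_time_ms(fps_after) - frame_time_ms(fps_before)
fps_drop_pct = 100.0 * (fps_before - fps_after) / fps_before
budget_ms = frame_time_ms(30)  # longest the panel waits before repeating

print(f"frame time before: {frame_time_ms(fps_before):.1f} ms")
print(f"frame time after : {frame_time_ms(fps_after):.1f} ms")
print(f"extra per frame  : {extra_ms:.1f} ms ({fps_drop_pct:.1f}% fewer fps)")
print(f"30Hz budget      : {budget_ms:.1f} ms")
```

Under these assumptions each frame takes a little under 3 ms longer with G-Sync on, and in both cases the frame time exceeds the 33.3 ms budget, so the panel would have to repeat a frame before the next one arrives.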

Dota 2 & Starcraft II

The impact of G-Sync can also be reduced at the other end of the spectrum. I tried both Dota 2 and Starcraft II with my GTX 760/G-Sync test system and in both cases I didn’t have a substantially better experience than with v-sync alone. Both games ran well enough on my 1080p testbed to almost always be at 60 fps, which made v-sync and G-Sync interchangeable in terms of experience.

Bioshock Infinite @ 144Hz

Up to this point all of my testing kept the refresh rate stuck at 60Hz. I was curious to see what the impact would be of running everything at 144Hz, so I did just that. This time I turned to Bioshock Infinite, whose integrated benchmark mode is a great test as there’s tons of visible tearing or stuttering depending on whether or not you have v-sync enabled.

Increasing the refresh rate to 144Hz definitely reduced the amount of tearing visible with v-sync disabled. I’d call it a substantial improvement, although not quite perfect. Enabling v-sync at 144Hz got rid of the tearing but still kept a substantial amount of stuttering, particularly at the very beginning of the benchmark loop. Finally, enabling G-Sync fixed almost everything. The G-Sync on scenario was just super smooth with only a few hiccups.

What’s interesting to me about this last situation is if 120/144Hz reduces tearing enough to the point where you’re ok with it, G-Sync may be a solution to a problem you no longer care about. If you’re hyper sensitive to tearing however, there’s still value in G-Sync even at these high refresh rates.
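One way to reason about why the higher refresh rate helps is to look at how long a single refresh lasts. The sketch below assumes, as a simplification, that a torn image stays on screen for about one refresh and that a frame that just misses a v-sync deadline waits at most one refresh interval; the helper name is hypothetical.

```python
def refresh_interval_ms(refresh_hz):
    """Time between two refreshes of a fixed-rate panel, in milliseconds."""
    return 1000.0 / refresh_hz

for hz in (60, 120, 144):
    print(f"{hz:>3} Hz: {refresh_interval_ms(hz):.2f} ms per refresh")
```

At 144Hz the interval is a bit under 7 ms compared with almost 17 ms at 60Hz, so under these assumptions both a tear and a missed refresh are on screen for well under half as long.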

193 Comments

tipoo - Thursday, December 12, 2013

Good to hear it mostly works well, if you can keep the framerate high enough. This with a high end computer and Oculus Rift would be an amazing combination, I hope both take off.

smunter6 - Thursday, December 12, 2013

Want to make any wild guesses as to what John Carmack's working on over in Oculus Rift's secret labs?

GiantPandaMan - Thursday, December 12, 2013

Except the Oculus Rift probably won't have it. They love non-proprietary stuff and G-Sync lands firmly in the proprietary category.

Make it a standard, make it cost about $10 more to implement rather than $120 and this will take off. I don't see this happening, though. NVidia just doesn't operate in that manner, unfortunately. It would make gaming so much better for the people who really need it--those with sub-par video cards.

No display maker is going to make a key component (the scaler) beholden only to a single manufacturer (nVidia). The technology needs to be licensed so it becomes an industry standard so that manufacturers can put it into their displays without having to rely on a single OEM.

psuedonymous - Thursday, December 12, 2013

Carmack himself mentioned at the panel after the G-sync reveal that the first consumer release of the Oculus would NOT contain G-sync, but that is definitely something they want to incorporate.

My guess is that the reason is the use of LVDS as the sole panel interface. There simply AREN'T any decent 5.6"-6" panels using LVDS. Nobody makes them. The relatively bad (6-bit FRC, crummy colours compared to modern panels, low fill-factor, low resolution, too big to be used efficiently) panel was a compromise in that it was the only one readily available in volume and compatible with the existing LVDS board. Phone/tablet panels in the correct size, resolution and quality range are all MIPI DSI, with the exception of the Retina iPad Mini, which uses an eDP panel like the iPad 3 onwards. Except that panel is still too large, and will be unavailable in volume until Apple decide to reduce their orders in 6 months or so. The current 1080p prototype uses one of the early DVI->MIPI chips (probably on an in-house board) because it's the only way to actually drive the panels available.

GiantPandaMan - Thursday, December 12, 2013

Interesting information. Thanks for posting it.

As useful as G-Sync would be for something like Oculus (especially for reducing motion sickness) it's still far too expensive to implement. Oculus, itself, wants to hold the line at $300. There's simply no way to fit $120 into that price.

Then there's the fact that Oculus would benefit far more from 120hz panels than it would from G-Sync. Honestly, I can't imagine Carmack or Oculus ever bad mouthing a new technology that it could benefit from in the future, but the fact remains there are so many other things that would be more cost effective for Oculus to do first. Higher resolution, 1920x2160 say; higher refresh rates, 120hz. Personally I hope they think about using some of the projector panels. They're smaller, lighter, and already have both the color depth and refresh rates. The only problem, of course, is they're probably too small and may be too expensive.

psuedonymous - Friday, December 13, 2013

They specifically avoid making a microdisplay-based HMD, because of the tradeoffs that every previous microdisplay HMD has had to make. Because the displays are small, you need some hefty optics to view the image, and these must be complicated in order to correct for distortion (as unlike the large-panel software-corrected approach the Rift uses, distortion with a much smaller display would be so great it could not be effectively corrected). This means the optics are bulky, heavy and expensive. And that goes doubly so if you want a large field-of-view (compare the Oculus 90° horizontal FoV to the HMD-1/2/3's 45° hFoV, and the HMD series were praised for their unusually large FoV compared to competing models). In fact, the only large FoV HMD I know of using microdisplays is the Sensics Pisight (http://sensics.com/head-mounted-displays/technolog... a huge 24 display monster that costs well in excess of $20,000.

And anything other than a tristimulus subpixel microdisplay (a tiny transmissive LCD) will have chromatic fringing when you look around due to sequential colour (http://blogs.valvesoftware.com/abrash/why-virtual-...

GiantPandaMan - Friday, December 13, 2013

Ahh, so I guess my fears on using projector panels are true. Damn. I guess we're going to be stuck with 60 hz on the Oculus for awhile. I just don't see phone displays moving up in refresh rates anytime soon.

I really want the Pisight now, but, unfortunately, I need to do things like eat and have shelter. :P

Black Obsidian - Thursday, December 12, 2013

G-Sync seems to live in a very small niche. How many people both:

A) Need better performance

*and*

B) Need a new monitor as well

?

Absent those two conditions, aren't people simply better off investing the ~$400 a G-Sync monitor would cost in, you know, a better video card instead? I experience neither tearing nor stuttering, because my absurd triple-slot, factory-overclocked R7970 has no problem pushing any game I play well beyond 60FPS. A special monitor would cost 80% what that card did at launch, so G-Sync seems like a bit of a non-starter to me, unless there's something I'm missing here.

IanCutress - Thursday, December 12, 2013

For the gamer that has it all?

I'm interested in G-Sync at 4K, where the need for AA is reduced and you're battling against 30-60 FPS numbers. But for those users who are in the mid range GPU market, having a good monitor that will last 5-10 years might be cheaper than a large GPU or system upgrade.

It's just another piece in the puzzle towards what will hopefully become standard. Think about it - in an ideal world, shouldn't this have been implemented from the start?