The Intel SSD 660p SSD Review: QLC NAND Arrives For Consumer SSDs

by Billy Tallis on August 7, 2018 11:00 AM EST

Power Management Features

Real-world client storage workloads leave SSDs idle most of the time, so the active power measurements presented earlier in this review only account for a small part of what determines a drive's suitability for battery-powered use. Especially under light use, the power efficiency of an SSD is determined mostly by how well it can save power when idle.

For many NVMe SSDs, the closely related matter of thermal management can also be important. M.2 SSDs can concentrate a lot of power in a very small space. They may also be used in locations with high ambient temperatures and poor cooling, such as tucked under a GPU on a desktop motherboard, or in a poorly-ventilated notebook.

| Intel SSD 660p 1TB NVMe Power and Thermal Management Features | | |
|---|---|---|
| Controller | Silicon Motion SM2263 | |
| Firmware | NHF034C | |
| NVMe Version | Feature | Status |
| 1.0 | Number of operational (active) power states | 3 |
| 1.1 | Number of non-operational (idle) power states | 2 |
| 1.1 | Autonomous Power State Transition (APST) | Supported |
| 1.2 | Warning Temperature | 77°C |
| 1.2 | Critical Temperature | 80°C |
| 1.3 | Host Controlled Thermal Management | Supported |
| 1.3 | Non-Operational Power State Permissive Mode | Not Supported |

The Intel SSD 660p's power and thermal management feature set is typical for current-generation NVMe SSDs. The rated exit latency from the deepest idle power state is quite a bit faster than what we have measured in practice from this generation of Silicon Motion controllers, but otherwise the drive's claims about its idle states seem realistic.

| Intel SSD 660p 1TB NVMe Power States | | | | |
|---|---|---|---|---|
| Controller | Silicon Motion SM2263 | | | |
| Firmware | NHF034C | | | |
| Power State | Maximum Power | Active/Idle | Entry Latency | Exit Latency |
| PS 0 | 4.0 W | Active | - | - |
| PS 1 | 3.0 W | Active | - | - |
| PS 2 | 2.2 W | Active | - | - |
| PS 3 | 30 mW | Idle | 5 ms | 5 ms |
| PS 4 | 4 mW | Idle | 5 ms | 9 ms |

Note that the above tables reflect only the information provided by the drive to the OS. The power and latency numbers are often very conservative estimates, but they are what the OS uses to determine which idle states to use and how long to wait before dropping to a deeper idle state.
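To make the role of those advertised numbers concrete, here is a minimal sketch of how a host could pick the deepest idle state that fits within a latency budget. It is not any particular operating system's algorithm: the `PowerState` type, the `deepest_allowed_idle_state` function and the `max_latency_ms` tolerance are hypothetical names introduced for illustration, and the only real data is the set of values the drive reports in the table above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class PowerState:
    """One row of the drive's advertised power state table (hypothetical type)."""
    name: str
    max_power_mw: float
    operational: bool
    entry_latency_ms: float = 0.0
    exit_latency_ms: float = 0.0


# Values as advertised by the Intel SSD 660p 1TB (not measured values).
STATES: List[PowerState] = [
    PowerState("PS 0", 4000.0, True),
    PowerState("PS 1", 3000.0, True),
    PowerState("PS 2", 2200.0, True),
    PowerState("PS 3", 30.0, False, 5.0, 5.0),
    PowerState("PS 4", 4.0, False, 5.0, 9.0),
]


def deepest_allowed_idle_state(
    states: List[PowerState], max_latency_ms: float
) -> Optional[PowerState]:
    """Return the lowest-power idle state whose entry + exit latency fits the budget.

    max_latency_ms is an assumed host-side tolerance, not something the drive reports.
    Returns None when no idle state is acceptable.
    """
    candidates = [
        s
        for s in states
        if not s.operational
        and s.entry_latency_ms + s.exit_latency_ms <= max_latency_ms
    ]
    return min(candidates, key=lambda s: s.max_power_mw, default=None)


if __name__ == "__main__":
    for budget_ms in (5.0, 10.0, 100.0):
        choice = deepest_allowed_idle_state(STATES, budget_ms)
        print(budget_ms, choice.name if choice else "stay in an active state")
```

Under this simplified policy, a host that tolerates only 10 ms of combined latency would stop at PS 3, while a more relaxed budget would allow PS 4. Because the decision rests entirely on the advertised latencies, a drive that understates its real wake-up time can be sent into a state that is slower to leave than the host expected.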

Idle Power Measurement

SATA SSDs are tested with SATA link power management disabled to measure their active idle power draw, and with it enabled for the deeper idle power consumption score and the idle wake-up latency test. Our testbed, like any ordinary desktop system, cannot trigger the deepest DevSleep idle state.

Idle power management for NVMe SSDs is far more complicated than for SATA SSDs. NVMe SSDs can support several different idle power states, and through the Autonomous Power State Transition (APST) feature the operating system can set a drive's policy for when to drop down to a lower power state. There is typically a tradeoff in that lower-power states take longer to enter and wake up from, so the choice about what power states to use may differ for desktops and notebooks.

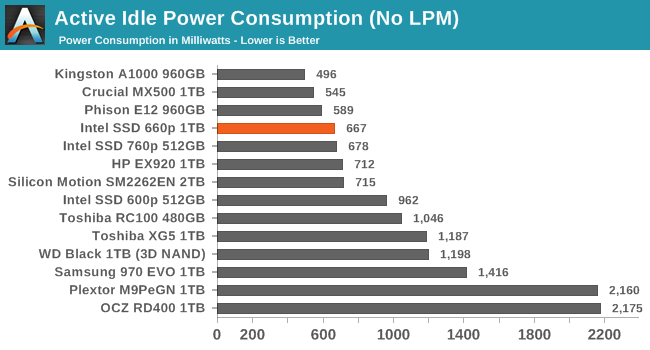

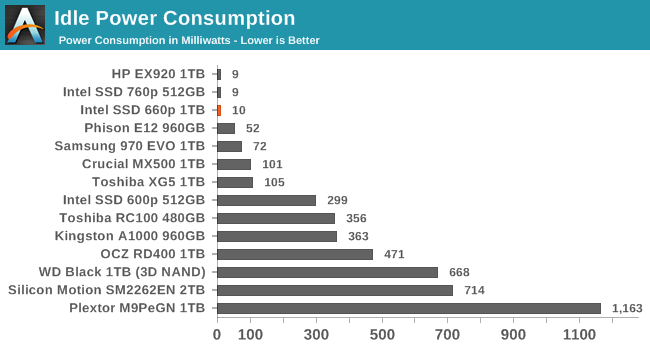

We report two idle power measurements. Active idle is representative of a typical desktop, where none of the advanced PCIe link or NVMe power saving features are enabled and the drive is immediately ready to process new commands. The idle power consumption metric is measured with PCIe Active State Power Management L1.2 state enabled and NVMe APST enabled if supported.

The Intel 660p has a slightly lower active idle power draw than the SM2262-based drives we've tested, thanks to the smaller controller and reduced DRAM capacity. It isn't the lowest active idle power we've measured from an NVMe SSD, but it is definitely better than most high-end NVMe drives. In the deepest idle state our desktop testbed can use, we measure an excellent 10mW draw.
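As a rough illustration of why idle draw matters so much for a mostly idle drive, the sketch below computes a time-weighted average power. Only the 10mW deep idle figure is a measurement from this review. The 4.0 W active figure is the drive's advertised PS 0 maximum, used here as an upper bound, and the 99% idle fraction and the 500 mW comparison drive are hypothetical values chosen for the example.

```python
def average_power_mw(active_mw: float, idle_mw: float, idle_fraction: float) -> float:
    """Time-weighted average power for a drive that is idle idle_fraction of the time."""
    if not 0.0 <= idle_fraction <= 1.0:
        raise ValueError("idle_fraction must be between 0 and 1")
    return (1.0 - idle_fraction) * active_mw + idle_fraction * idle_mw


ACTIVE_MW = 4000.0            # assumption: advertised PS 0 maximum, not a measured value
DEEP_IDLE_MW = 10.0           # deep idle draw measured in this review
HYPOTHETICAL_IDLE_MW = 500.0  # hypothetical drive that never reaches a deep idle state
IDLE_FRACTION = 0.99          # hypothetical light client workload

if __name__ == "__main__":
    deep = average_power_mw(ACTIVE_MW, DEEP_IDLE_MW, IDLE_FRACTION)
    shallow = average_power_mw(ACTIVE_MW, HYPOTHETICAL_IDLE_MW, IDLE_FRACTION)
    print(f"with deep idle:    {deep:.1f} mW")
    print(f"without deep idle: {shallow:.1f} mW")
```

With these assumed inputs the average works out to roughly 50 mW with deep idle and roughly 535 mW without it, so the idle state accounts for most of the difference even though the active power is identical in both cases.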

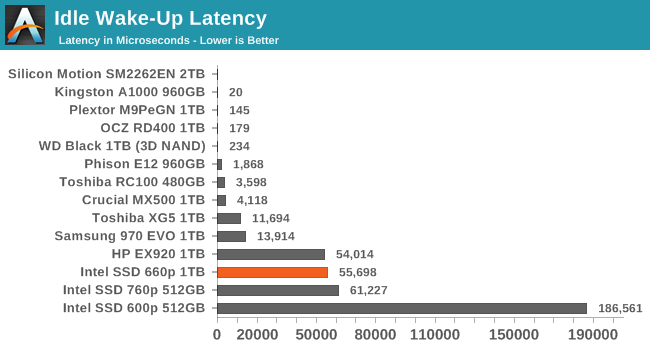

The Intel 660p's idle wake-up time of about 55ms is typical for Silicon Motion's current generation of controllers and much better than their first-generation NVMe controller as used in the Intel SSD 600p. The Phison E12 can wake up in under 2ms from a sleep state of about 52mW, but otherwise the NVMe SSDs that wake up quickly were saving far less power than the 660p's deep idle.

86 Comments

limitedaccess - Tuesday, August 7, 2018 - link

SSD reviewers need to look into testing data retention and related performance loss. Write endurance is misleading.

Ryan Smith - Tuesday, August 7, 2018 - link

It's definitely a trust-but-verify situation, and is something we're going to be looking into for the 660p and other early QLC drives.

Besides the fact that we only had limited hands-on time with this drive ahead of the embargo and FMS, it's going to take a long time to test the drive's longevity. Even with 24/7 writing, with a sustained 100MB/sec write rate you're looking at only around 8TB written/day. Which means you're looking at weeks or months to exhaust the smallest drive.

eastcoast_pete - Tuesday, August 7, 2018 - link

Hi Ryan and Billie,

I second the questions by limitedaccess and npz, also on data retention in cold storage. Now, about Ryan's answer: I don't expect you guys to be able to torture every drive for months on end until it dies, but, is there any way to first test the drive, then run continuous writes/rewrites for seven days non-stop, and then re-do some core tests to see if there are any signs or even hints of deterioration? The issue I have with most tests is that they are all done on virgin drives with zero hours on them, which is a best-case scenario. Any decent drive should be good as new after only 7 days (168 hours) of intensive read/write stress. If it's still as good as when you first tested it, I believe that would bode well for possible longevity. Conversely, if any drive shows even mild deterioration after only a week of intense use, I'd really like to know, so I can stay away.

Any chance for that or something similar?

JoeyJoJo123 - Tuesday, August 7, 2018 - link

>and then re-do some core tests to see if there are any signs or even hints of deterioration?

That's not how solid state devices work. They're either working or they're not. And even if they're dead, that's not to say anything that it was indeed the nand flash that deteriorated beyond repair, it could've been the controller or even the port the SSD was connected to that got hosed.

Literally testing a single drive says absolutely nothing at all about the expected lifespan of your single drive. This is why mass aggregate reliability ratings from people like Backblaze are important. They buy enough bulk drives that they can actually average out the failure rates and get reasonable real world reliability numbers of the drives used in hot and vibration-prone server rack environments.

Anandtech could test one drive and say "Well it worked when we first plugged it in, and when we rebooted, the review sample we got no longer worked. I guess it was a bad sample" or "Well, we stress tested it for 4 weeks under a constant mixed read/write load, and the SMART readings show that everything is absolutely perfect, we can extrapolate that no drive of this particular series will never _ever_ fail for any reason whatsoever until the heat death of the universe". Either way, both are completely anecdotal evidence, neither can have any real conclusive evidence found due to the sample size of ONE drive, and does nothing but possibly kill the storage drive off prematurely for the sake of idiots salivating over elusive real world endurance rating numbers when in reality IT REALLY DOESN'T MATTER TO YOU.

Are you a standard home consumer? Yes.

And you're considering purchasing this drive that's designed and marketed towards home consumers (ie: this is not a data center priced or marketed product)?: Yes.

Are you using it under normal home consumer workloads (ie: you're not reading/writing hundreds of MB/s 24/7 for years on end)? Yes.

Then you have nothing to worry about. If the drive dies, then you call up/email the manufacturer and get warranty replacement for your drive. And chances are, your drives are more likely to become useless due to ever faster and more spacious storage options in the future than they are to fail. I got a basically worthless 80GB SATA 2 (near first gen) SSD that's neither fast enough to really use as a boot drive nor spacious enough to be used anywhere else. If anything the NAND on that early model should be dead, but it's not, and chances are the endurance ratings are highly pessimistic of their actual death as seen in the Ars Technica report where Lee Hutchinson stressed SSDs 24/7 for ~18 months before they died.

eastcoast_pete - Tuesday, August 7, 2018 - link

Firstly, thanks for calling me one of the "idiots salivating over elusive real world endurance rating numbers". I guess it takes one to know one, or think you found one. Second, I am quite aware of the need to have a sufficient sample size to make any inference to the real world. And third, I asked the question because this is new NAND tech (QLC), and I believe it doesn't hurt to put the test sample that the manufacturer sends through its paces for a while, because if that shows any sign of performance deterioration after a week or so of intense use, it doesn't bode well for the maturity of the tech and/or the in-house QC.

And, your last comment about your 80 GB near first gen drive shows your own ignorance. Most/maybe all of those early SSDs were SLC NAND, and came with large overprovisioning, and yes, they are very hard to kill. This new QLC technology is, well, new, so yes I would like to see some stress testing done, just to see if the assumption that it's all just fine holds, at least for the drive the manufacturer provided.

Oxford Guy - Tuesday, August 7, 2018 - link

If a product ships with a defect that is shared by all of its kind then only one unit is needed to expose it.

mapesdhs - Wednesday, August 8, 2018 - link

Proof by negation, good point. :)

Spunjji - Wednesday, August 8, 2018 - link

That's a big if, though. If say 80% of them do and Anandtech gets the one that doesn't, then...

2nd gen OCZ Sandforce drives were well reviewed when they first came out.

Oxford Guy - Friday, August 10, 2018 - link

"2nd gen OCZ Sandforce drives were well reviewed when they first came out."

That's because OCZ pulled a bait and switch, switching from 32-bit NAND, which the controller was designed for, to 64-bit NAND. The 240 GB model with 64-bit NAND, in particular, had terrible bricking problems.

Beyond that, there should have been pressure on Sandforce's decision to brick SSDs "to protect their firmware IP" rather than putting users' data first. Even prior to the severe reliability problems being exposed, that should have been looked at. But, there is generally so much passivity and deference in the tech press.

Oxford Guy - Friday, August 10, 2018 - link

This example shows why it's important for the tech press to not merely evaluate the stuff they're given but go out and get products later, after the initial review cycle. It's very interesting to see the stealth downgrades that happen.

The Lenovo S-10 netbook was praised by reviewers for having a matte screen. The matte screen, though, was replaced by a cheaper-to-make glossy later. Did Lenovo call the machine with a glossy screen the S-11? Nope!

Sapphire, I just discovered, got lots of reviewer hype for its vapor chamber Vega cooler, only to replace the models with those. The difference? The ones with the vapor chamber are, so conveniently, "limited edition". Yet, people have found that the messaging about the difference has been far from clear, not just on Sapphire's website but also on some review sites. It's very convenient to pull this kind of bait and switch. Send reviewers a better product then sell customers something that seems exactly the same but which is clearly inferior.