The Intel SSD 600p (512GB) Review

by Billy Tallis on November 22, 2016 10:30 AM EST

Performance Consistency

Our performance consistency test explores the extent to which a drive can reliably sustain performance during a long-duration random write test. Specifications for consumer drives typically list peak performance numbers only attainable in ideal conditions. Performance in a worst-case scenario can be drastically different: over the course of a long test, drives can run out of spare area, have to start performing garbage collection, and sometimes even reach power or thermal limits.

In addition to an overall decline in performance, a long test can show patterns in how performance varies on shorter timescales. Some drives will exhibit very little variance in performance from second to second, while others will show massive drops in performance during each garbage collection cycle but otherwise maintain good performance, and others show constantly wide variance. If a drive periodically slows to hard drive levels of performance, it may feel slow to use even if its overall average performance is very high.

To maximally stress the drive's controller and force it to perform garbage collection and wear leveling, this test conducts 4kB random writes with a queue depth of 32. The drive is filled before the start of the test, and the test duration is one hour. Any spare area will be exhausted early in the test and by the end of the hour even the largest drives with the most overprovisioning will have reached a steady state. We use the last 400 seconds of the test to score the drive both on steady-state average writes per second and on its performance divided by the standard deviation.
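The scoring described above can be sketched in a few lines of Python. This is only an illustration, not the review's actual tooling: the function name `steady_state_score`, the one-sample-per-second log format, and the choice of population standard deviation are all assumptions, and the input data is synthetic.

```python
from statistics import fmean, pstdev


def steady_state_score(iops_log, window=400):
    """Score the last `window` one-second IOPS samples of a test run.

    Returns (average, consistency), where consistency is the average
    divided by the standard deviation of the same samples.
    Assumption: population standard deviation; the article does not say
    which variant is used.
    """
    if len(iops_log) < window:
        raise ValueError("log is shorter than the scoring window")
    tail = iops_log[-window:]
    average = fmean(tail)
    spread = pstdev(tail)
    # A perfectly flat trace has zero spread; treat it as infinitely consistent.
    consistency = average / spread if spread > 0 else float("inf")
    return average, consistency


if __name__ == "__main__":
    # Synthetic hour-long log: a fast start, then a crude sawtooth at the end.
    log = [20000] * 3200 + [1000, 3000] * 200
    average, consistency = steady_state_score(log)
    print(f"steady-state average: {average:.0f} IOPS")
    print(f"consistency (mean / std dev): {consistency:.2f}")
```

A drive that holds a nearly flat line gets a high consistency value under this definition, while a drive that swings between fast and slow seconds scores low even if its average is respectable.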

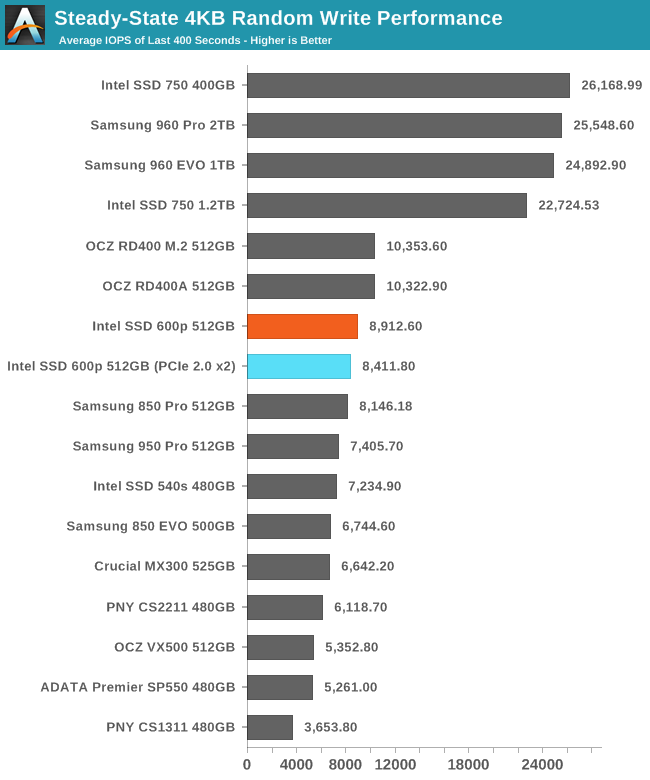

The Intel 600p's steady state random write performance is reasonably fast, especially for a TLC SSD. The 600p is faster than all of the SATA SSDs in this collection. The Intel 750 and Samsung 960s are in an entirely different league, but the OCZ RD400 is only slightly ahead of the 600p.

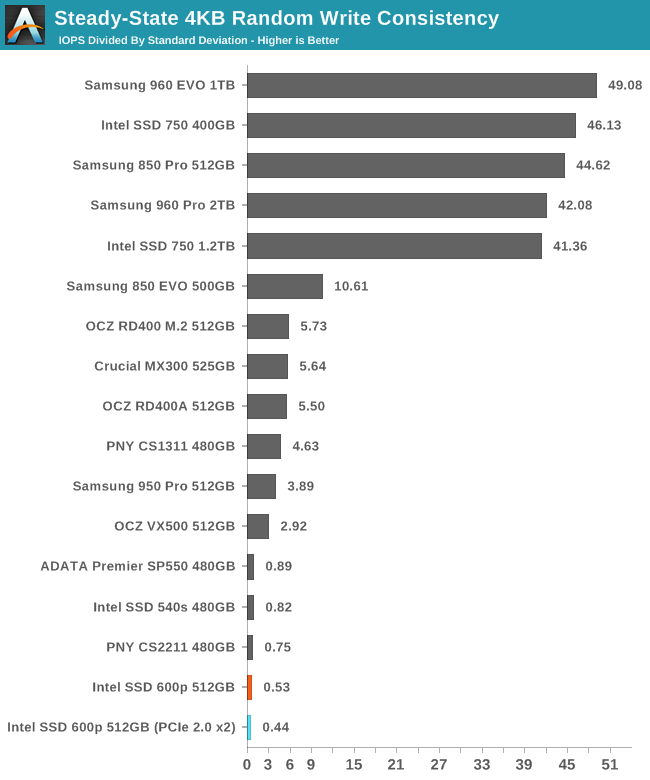

Despite a decently high average performance, the 600p has a very low consistency score, indicating that even after reaching steady state, the performance varies widely and the average does not tell the whole story.

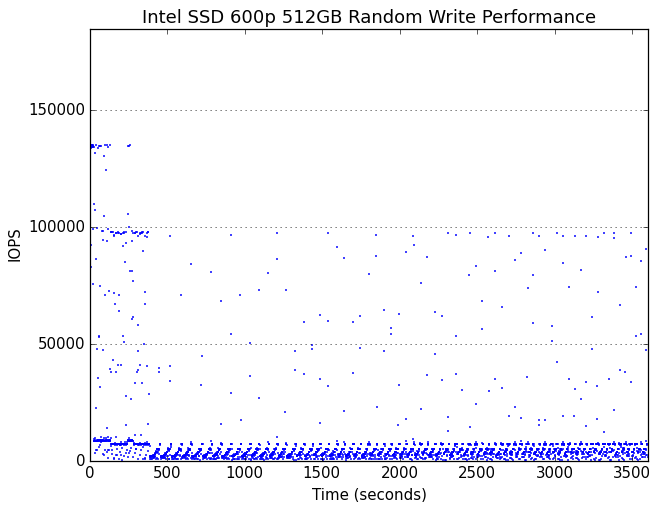

Very early in the test, the 600p begins showing cyclic drops in performance due to garbage collection. Several minutes into the hour-long test, the drive runs out of spare area and reaches steady state.

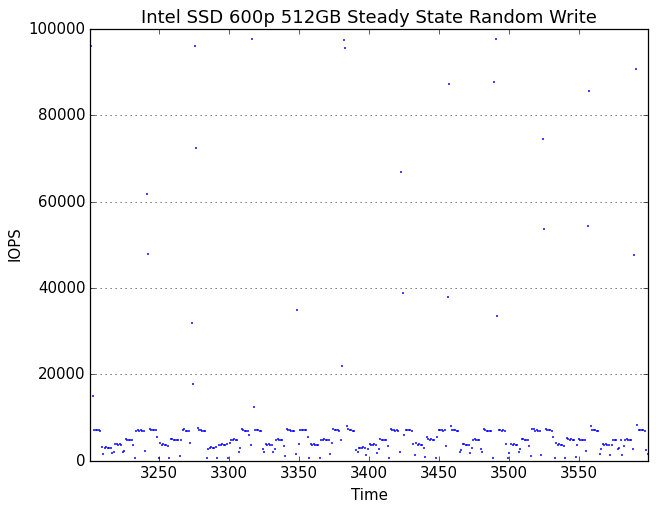

In its steady state, the 600p spends most of the time tracing out a sawtooth curve of performance that has a reasonable average but is constantly dropping down to very low performance levels. Oddly, there are also brief moments of unhindered performance where the drive spikes to exceptionally high performance of up to 100k IOPS, but these are short and infrequent enough to have little impact on the average performance. It would appear that the 600p occasionally frees up some SLC cache, which then immediately gets used up and kicks off another round of garbage collection.

With extra overprovisioning, the 600p's garbage collection cycles don't drag performance down as far, making the periodicity less obvious.

63 Comments

vFunct - Tuesday, November 22, 2016 - link

These would be great for server applications, if I could find PCIe add-in cards that have 4x M.2 slots.

I'd love to be able to stick 10 or 100 or so of these in a server, as an image/media store.

ddriver - Tuesday, November 22, 2016 - link

You should call intel to let them know they are marketing it in the wrong segment LOL

ddriver - Tuesday, November 22, 2016 - link

To clarify, this product is evidently the runt of the nvme litter. For regular users, it is barely faster than sata devices. And once it runs out of cache, it actually gets slower than a sata device. Based on its performance and price, I won't be surprised if its reliability is just as subpar. Putting such a device in a server is like putting a drunken hobo in a Lamborghini.

BrokenCrayons - Tuesday, November 22, 2016 - link

Assuming a media storage server scenario, you'd be looking at write once and read many where the cache issues aren't going to pose a significant problem to performance. Using an array of them would also mitigate much of that write performance using some form of RAID. Of course that applies to SATA devices as well, but there's a density advantage realized in NVMe.

vFunct - Tuesday, November 22, 2016 - link

bingo.

Now, how can I pack a bunch of these in a chassis?

BrokenCrayons - Tuesday, November 22, 2016 - link

I'd think the best answer to that would be a custom motherboard with the appropriate slots on it to achieve high storage densities in a slim (maybe something like a 1/2 1U rackmount) chassis. As for PCIe slot expansion cards, there's a few out there that would let you install 4x M.2 SSDs on a PCIe slot, but they'd add to the cost of building such a storage array. In the end, I think we're probably a year or three away from using NVMe SSDs in large storage arrays outside of highly customized and expensive solutions for companies that have the clout to leverage something that exotic.

ddriver - Tuesday, November 22, 2016 - link

So are you going to make that custom motherboard for him, or will he be making it for himself? While you are at it, you may also want to make a cpu with 400 pcie lanes so that you can connect those 100 lousy budget p600s.

Because I bet the industry isn't itching to make products for clueless and moneyless dummies. There is already a product that's unbeatable for media storage - an 8tb ultrastar he8. As ssd for media storage - that makes no sense, and a 100 of those only makes a 100 times less sense :D

BrokenCrayons - Tuesday, November 22, 2016 - link

"So are you going to make that..."

Sure, okay.

Samus - Tuesday, November 22, 2016 - link

ddriver, you are ignoring his specific application when judging his solution to be wrong. For imaging, sequential throughput is all that matters. I used to work part time in PC refurbishing for education and we built a bench to image 64 PC's at a time over 1Gbe with a dual 10Gbe fiber backbone to a server using, which was at the time the best option on the market, an OCZ RevoDrive PCIe SSD. Even this drive was crippled by a single 10Gbe connection let alone dual 10Gbe connections, which is why we eventually installed TWO of them in RAID 1.

This hackjob configuration allowed imaging 60+ PC's simultaneously over GBe in about 7 minutes when booting via PXE, running a diskpart script and imagex to uncompress a sysprep'd image.

The RevoDrive's were not reliable. One would fail like clockwork almost annually, and eventually in 2015 after I had left I heard they fell back to a pair of Plextor M2 2280's in a PCIe x4 adapter for better reliability. It was, and still is, however, very expensive to do this compared to what the 600p is offering.

Any high-throughput sequential reading application would greatly benefit from the performance and price the 600p is offering, not to mention Intel has class leading reliability in the SSD sector of 0.3%/year failure rate according to their own internal 2014 data...there is no reason to think of all companies Intel won't keep reliability as a high priority. After all, they are still the only company to mastermind the Sandforce 2200, a controller that had incredibly high failure rates across every other vendor and effectively led to OCZ's bankruptcy.

ddriver - Tuesday, November 22, 2016 - link

So how does all this connect to, and I quote, "stick 10 or 100 or so of these in a server, as an image/media store"?

Also, he doesn't really have "his specific application", he just spat a bunch of nonsense he believed would be cool :D

Lastly, next time try multicasting, this way you can simultaneously send data to 64 hosts at 1 gbps without the need for dual 10gbit or an uber expensive switch, achieving full parallelism and an effective 64 gbps. In that case a regular sata ssd or even an hdd would have sufficed as even mechanical drives have no problem saturating the 1 gbps lines to the targets. You could have done the same work, or even better, at like 1/10 of the cost. You could even do 1000 systems at a time, or as many as you want, just daisy chain more switches, terabit, petabit effective cumulative bandwidth is just as easily achievable.